Overclocking Intel’s Xeon E5620: Quad-Core 32 nm At 4+ GHz

Overclocking Intel’s Xeon

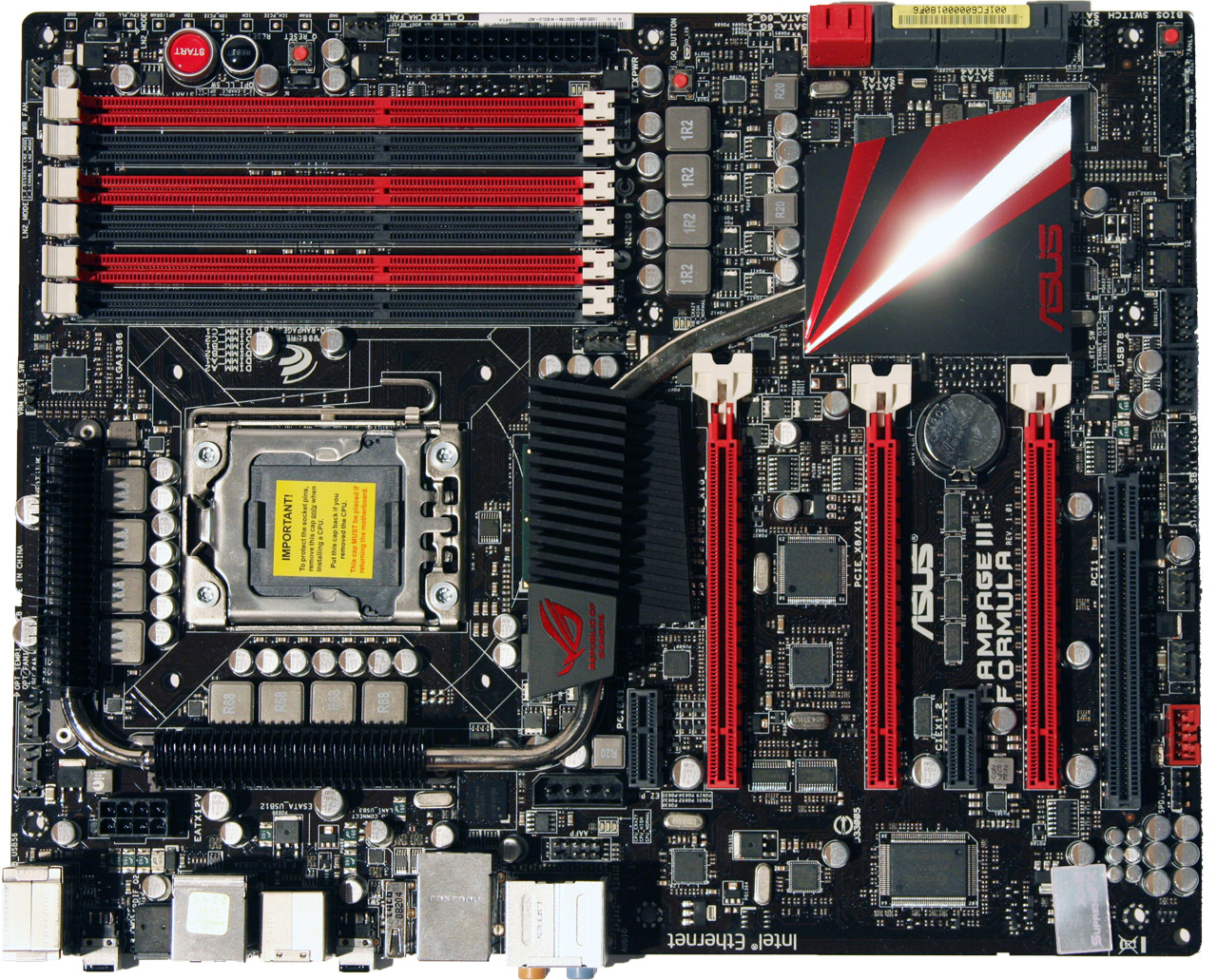

The first thing to remember about dropping a 2P processor in a desktop platform is that not every motherboard recognizes Xeon CPUs. Asus’ Rampage III Formula does (the company says it tries to give all of its relevant RoG platforms this capability). I’ve also heard good things about certain EVGA platforms, though I don’t have any of the company’s motherboards in-house to cross-check. Should you decide to follow this path, double-check that your board will, in fact, take a Xeon processor.

Limitations

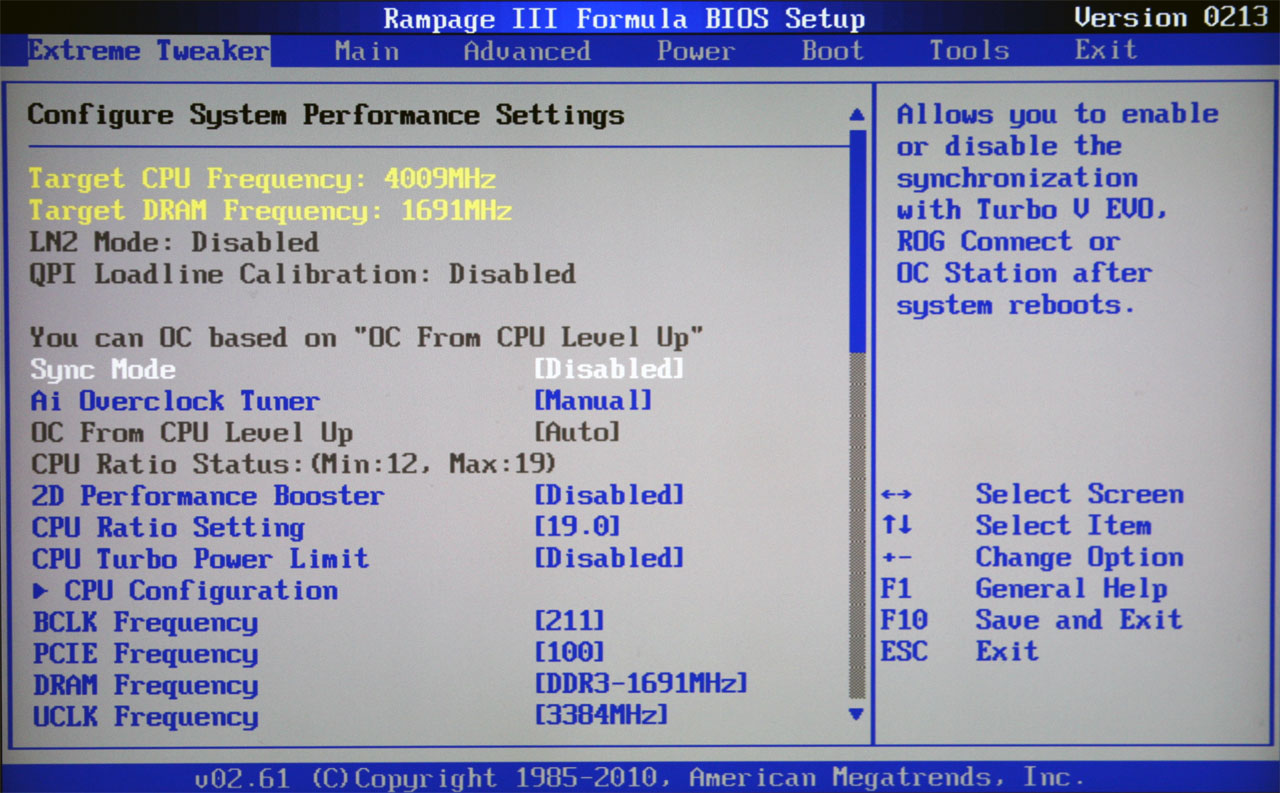

Now, right up front, we know that there is a practical limit where Intel’s reference clock, known as the BCLK, gets hung up. That ceiling is generally in the 220 MHz range. Multiply that number by the E5620’s highest supported ratio, 19x, and you get a fairly feasible 4.18 GHz operating frequency. That’d be nearly a 1.8 GHz overclock over the chip’s 2.4 GHz stock clock. Not bad…not bad at all.
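The multiplication above is easy to check for any BCLK setting. Here is a minimal sketch in Python, assuming the core clock is simply the BCLK times the CPU ratio; the function name `core_clock_ghz` and the constant `MAX_RATIO` are made up for illustration, and the BCLK values are the ones tried in this article.

```python
def core_clock_ghz(bclk_mhz: float, ratio: int) -> float:
    """Core frequency in GHz for a given reference clock (MHz) and CPU ratio."""
    return bclk_mhz * ratio / 1000.0


# Highest ratio the article reports for the Xeon E5620.
MAX_RATIO = 19

# Stock BCLK (133 MHz as quoted in the article) plus the overclocked settings tried.
for bclk in (133, 220, 221, 226, 231):
    ghz = core_clock_ghz(bclk, MAX_RATIO)
    print(f"BCLK {bclk} MHz x {MAX_RATIO} = {ghz:.2f} GHz")
```

With a locked multiplier, the BCLK is the only lever here, which is why every extra 100 MHz of core clock costs roughly another 5 MHz of reference clock.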

Remember, though, that upping the BCLK from 133 to 220 MHz throws a lot of other frequencies out of whack. Intel arms the Xeon with a handful of divisors to help narrow the range of clock rates you can use, but they’re fairly limited. For example, you’ll only want to use the 800 or 1066 MT/s memory ratios. Similarly, QPI needs to be set to 4.8 or 5.86 GT/s. Although the Asus Rampage III Formula motherboard I used for this experiment supports more aggressive settings, using them prevents the platform from booting. Not that we would have wanted to anyway, since setting each field to the lowest possible value gives us the most headroom for an aggressive overclock.

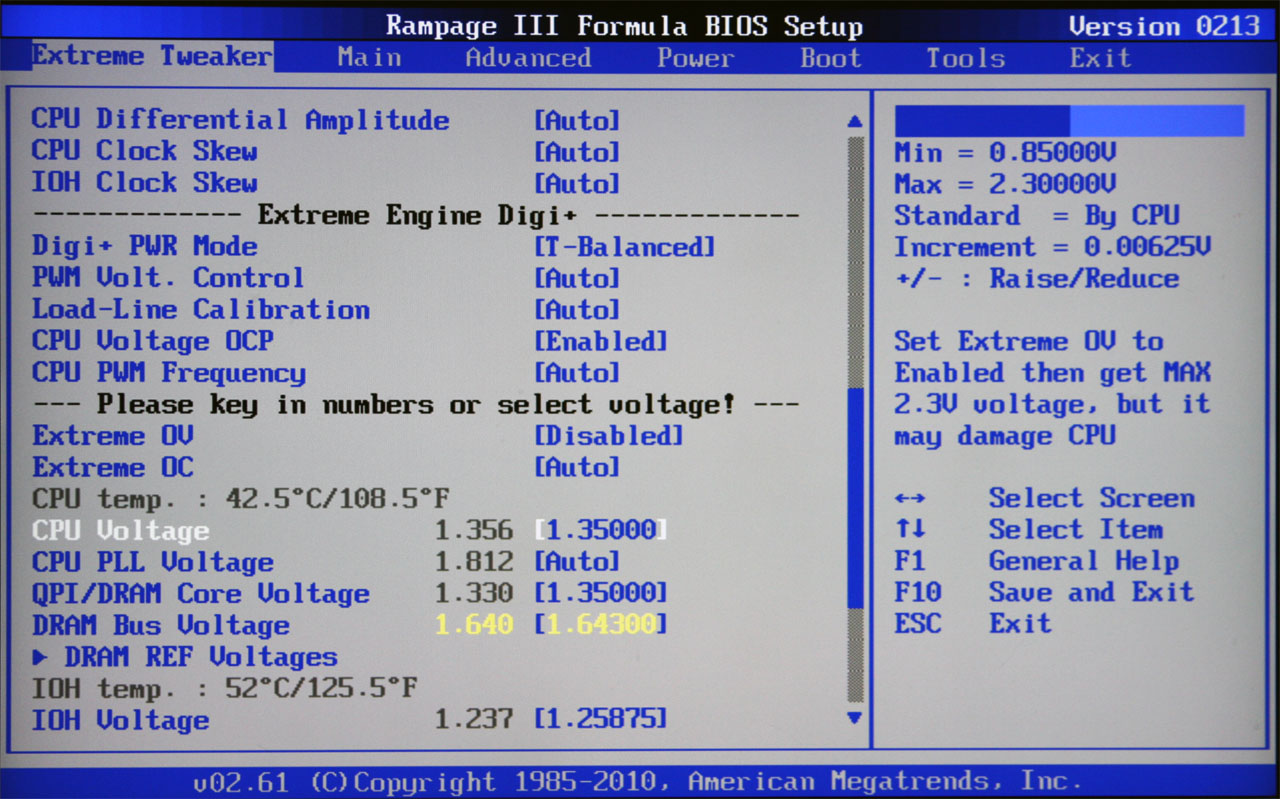

As you start pushing frequencies other than the core clock beyond their specifications, it often becomes necessary to goose voltage levels, too. This will almost always be the case for Intel’s Xeon E5620, Core i7-930, or Core i7-970—the three CPUs on our bench today.

Working Around Them

A 221 MHz BCLK setting was already pushing my Xeon E5620 sample fairly hard for a 4.2 GHz clock rate. Even with the lowest DDR3-800 and 4.8 GT/s ratios set, I was forcing a 7976 MT/s QPI rate. This was actually doable with a 1.4 V CPU voltage, 1.425 V QPI/DRAM voltage, and 1.35 V IOH voltage. Those sound fairly high, but our Xeon processor handled them well, never exceeding 75 degrees Celsius with eight threads active in Prime95.

Dialing in 4.3 GHz required a 226 MHz BCLK setting—well beyond where this board wanted to go. That was an 8156 MT/s QPI data rate, with memory clocked at DDR3-1359 (not a problem for my 2000 MT/s Patriot Sector 7 kit), and a 3625 MHz uncore frequency. At this point, I had to pull out a couple of tricks. An increased PCIe clock (110 MHz) was needed to even boot up. Moreover, the QPI Link Data Rate had to be set to Slow Mode or, again, the machine simply wouldn’t boot. Be careful with PCIe voltage adjustments, though. After bumping up the PCIe frequency and IOH/ICH PCIe voltages, I fried the onboard Intel gigabit Ethernet controller. It simply wouldn't show up in Windows afterward.

Cranking the BCLK up to 231 MHz, yielding 4.4 GHz, might have even been viable. Unfortunately, no combination of voltages, differential amplitudes, or clock skews could lock in stability with an 8337 MT/s QPI data rate. Had this CPU been unlocked, though, I’m confident it would have handled 4.4 GHz without a problem.
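The QPI and memory figures quoted in this section line up with a simple scaling rule: take the stock setting (4.8 GT/s QPI, DDR3-800) and multiply it by the new BCLK divided by 133 MHz. That rule is an assumption inferred from the numbers, not something stated here about how the board derives them, and the helper name `scaled_rate` is hypothetical. A rough sketch:

```python
# Stock reference clock as quoted in the article (MHz).
STOCK_BCLK_MHZ = 133


def scaled_rate(stock_rate: float, bclk_mhz: float) -> int:
    """Scale a stock data rate (MT/s) by the ratio of new BCLK to stock BCLK.

    Assumption: the derived rate tracks the BCLK linearly and is rounded
    to the nearest whole MT/s.
    """
    return round(stock_rate * bclk_mhz / STOCK_BCLK_MHZ)


QPI_STOCK = 4800   # lowest QPI setting, 4.8 GT/s
MEM_STOCK = 800    # lowest memory setting, DDR3-800

for bclk in (221, 226, 231):
    qpi = scaled_rate(QPI_STOCK, bclk)
    mem = scaled_rate(MEM_STOCK, bclk)
    print(f"BCLK {bclk} MHz: QPI ~{qpi} MT/s, memory ~DDR3-{mem}")
```

Under that assumption, even the lowest QPI setting ends up running about 70% above its nominal rate by the time the BCLK reaches 226 MHz, which fits with QPI being the part of the platform that refused to stabilize.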

My goal wasn’t to find the most extreme settings possible before popping a processor, though. So I dialed things back a bit for this comparison.

intelx I wish it had a higher multiplier. It would have been a great processor to recommend rather than paying $10000 for the i7 970.

JOSHSKORN I wonder if it's possible and also if it'd be useful to do a test of various server configurations for game hosting. Say for instance we want to build a game server and don't know what parts are necessary for the amount of players we want to support without investing too much into specifications we don't necessarily need. Like say I hosted a 64-player server of Battlefield or CoD or however the max amount of players are. Would a Core i7 be necessary or would a Dual-Core do the job with the same overall player experience? Would also want to consider other variables: memory, GPU. I realize results would also vary depending on the server location, its speed, and the player's location and speed, too, along with their system's specs.

cangelini (replying to JOSHSKORN)

Josh, if you have any ideas on testing, I'm all ears! We're currently working with Intel on server/workstation coverage (AMD has thus far been fairly unreceptive to seeing its Opteron processors tested).

Regards,

Chris

You could set up a small network with very fast LAN speeds (10Gbps maybe?). You can test ping and responsiveness on the clients, and check CPU/memory usage on the server. By eliminating the bottleneck of the connection and testing many different games with dedicated servers, one can actually get a good idea of what is needed to eliminate bottlenecks produced by the hardware itself.

Moshu78 Dear Chris,

thank you for the review, but your benchmarks prove that you were GPU-bottlenecked almost all the time.

Let me explain with an example: in Metro 2033 or Just Cause 2, the Xeon running at 2.4 GHz provided the same FPS as when it ran at 4 GHz. That means your GPU is the bottleneck: the increase in CPU speed, and therefore in the number of frames sent to the GPU for processing each second, does not produce any visible increase in output... so the GPU already has too much to process.

I also want to point out that enabling AA and AF in CPU tests puts additional stress on the GPU, bottlenecking the system even more. It should be forbidden to do so... since your goal is to test the CPU, not the GPU.

Please (and not only you, there is more than one article at Tom's) try to reconsider the testing methodology, what a bottleneck means, how you can detect it, and so on...

Since the 480 was the bottleneck, most of the gaming results are useless except for seeing how many FPS a GF480 provides in games at various resolutions and with AA/AF. But that wasn't the point of the article.

LE: missed the text under the graphs... seems you are aware of the issue. :) Still would like to see the CPU tests performed on more GPU muscle or on lower resolutions/older games. This way you'll be able to get to the real interesting part: where/when does the CPU bottleneck?

Looks to me to be a pointless exercise. I have been running an i7-860 @ 4.05 GHz at low temps for more than a year now, so why pay for a motherboard that expensive plus the chip?

Cryio I have a question. Maybe two. First: Since when is Just Cause 2 a DX11 game? I knew it was only DX10/10.1. And even if it is, what are the differences between the DX10 and 11 versions?

omoronovo (quoting blibba) Note: Higher clocked Xeons are available.

However, I'm sure everyone is aware of how sharply the price of Xeons rises above the lowest-of-the-low. I expect that with a Xeon capable of 4.5 GHz (a good speed to aim for with a 32nm chip and good cooling), you would already be over the cost of purchasing a 970/980x/990x, especially considering how good a motherboard you would need to get. A Rampage III Extreme is possibly one of the most expensive X58 boards on the market, offsetting most of the gains you'd get over a 45nm chip and a more wallet-friendly board, such as the Gigabyte GA-X58A-UD3R.

compton This is one of the best articles in some time. I went AMD with the advent of the Phenom IIs despite never owning or using them previously, and I didn't once long to go back to Intel for my processor needs. But I think that may have changed with the excellent 32nm products. The 980X might be the cat's pajamas, but $1000 is too much unless you KNOW you need it (like 3x SLI 480s, or actual serious multithreaded workloads when TIME = $$$). The lowly i3 has seriously impressed the hell out of me for value/performance, heat, and price/performance. Now, this Xeon rears its head. While still pricey in absolute terms, it is still a great value play. Intel has earned my business back with their SSDs. Now might be the time to get back in on their processors, even if Intel's content to keep this chip in the Xeon line. Thanks for the illumination.