PCIe 8.0 spec targets 1 TB/s of bandwidth and evaluates new connector technology: spec hits version 0.5 milestone, final ratification expected in 2028

First draft specification is ready.

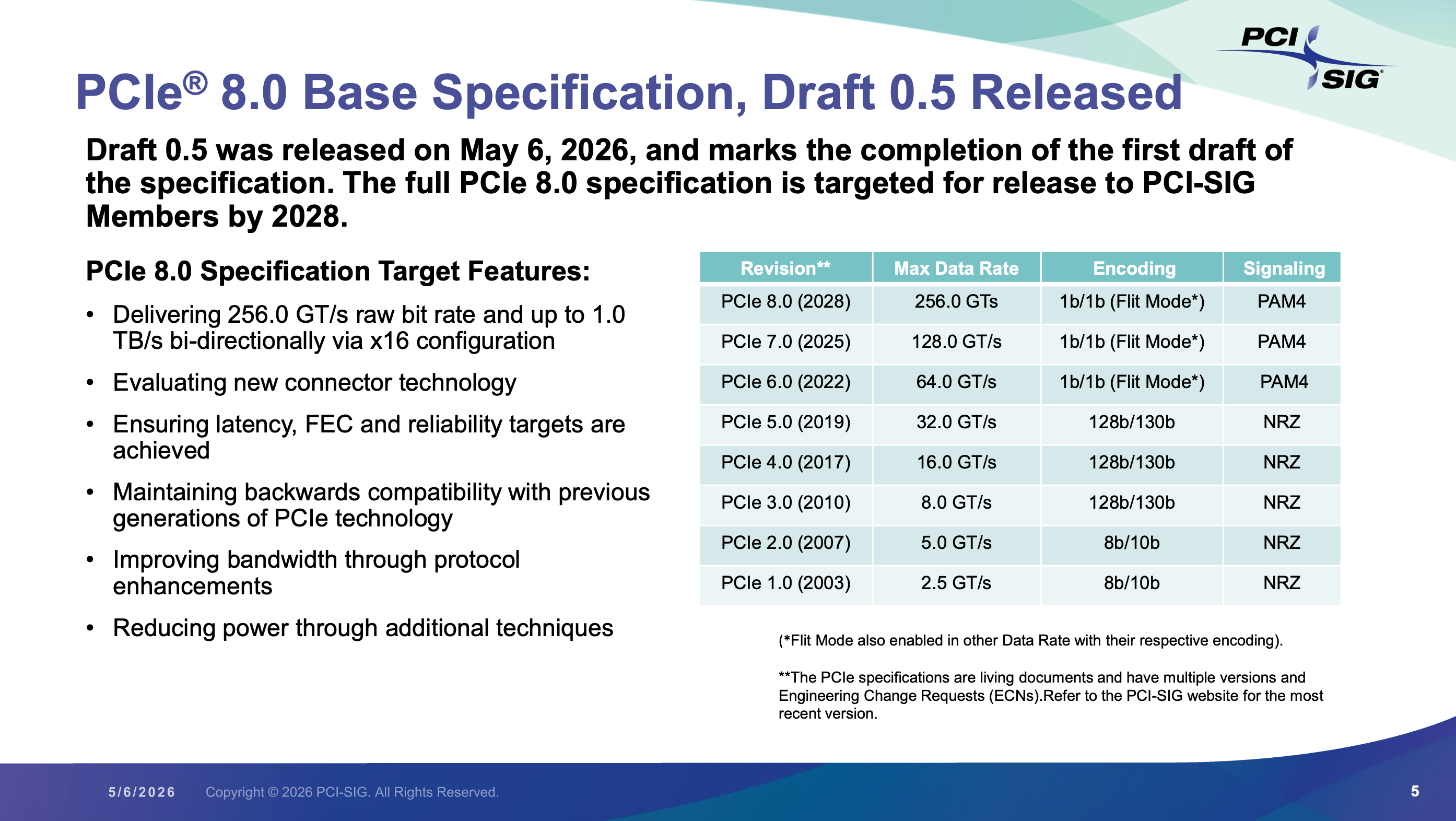

The PCI-SIG, the organization that oversees development of PCIe and adjacent standards, announced on Wednesday the availability of version 0.5 of the PCIe 8.0 draft specification, a major milestone. This first draft sets the architectural requirements and is designed to let PCI-SIG members start prototyping and submit their final proposals. Version 0.5 retains the 256 GT/s transfer rate target for the new interconnect, which enables up to 1 TB/s of bi-directional bandwidth in an x16 configuration.
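The headline figure follows from simple arithmetic on the per-lane transfer rate. The sketch below is a rough illustration, not part of the PCI-SIG announcement: the function name `raw_bandwidth_gb_s` and the rate table are hypothetical, each transfer is assumed to carry one bit per lane, and encoding, FEC, and protocol overhead are ignored.

```python
# Per-lane transfer rates in GT/s for recent PCIe generations.
# The 8.0 entry is the draft target of 256 GT/s.
RATE_GT_S = {4: 16, 5: 32, 6: 64, 7: 128, 8: 256}


def raw_bandwidth_gb_s(gen, lanes, bidirectional=False):
    """Raw link bandwidth in GB/s.

    Assumption: one bit per transfer per lane, with no allowance for
    encoding, FEC, or protocol overhead, so real throughput is lower.
    """
    gbit_per_s = RATE_GT_S[gen] * lanes      # gigabits per second, one direction
    gbyte_per_s = gbit_per_s / 8             # convert bits to bytes
    return gbyte_per_s * 2 if bidirectional else gbyte_per_s


if __name__ == "__main__":
    # x16 link at the PCIe 8.0 target rate, one direction and both directions.
    print(raw_bandwidth_gb_s(8, 16))
    print(raw_bandwidth_gb_s(8, 16, bidirectional=True))
```

Under these assumptions an x16 PCIe 8.0 link carries 512 GB/s in each direction, and the roughly 1 TB/s figure appears only when both directions are summed.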

Version 0.5 of the PCIe 8.0 specification is the first full draft that locks in key conceptual targets and mechanisms and outlines all major aspects of the architecture, including electrical, logical, compliance, and software. This means that PCI-SIG maintains a target bit rate of 256 GT/s; PAM4 signaling with forward error correction (FEC) and Flit Mode encoding; bandwidth-improving protocol enhancements; backward compatibility; and new connector technology that is now being evaluated. However, version 0.5 is not the final draft and not all parts of the specification are frozen, so some electrical parameters and protocol optimizations can still be tuned.

The new release is a major milestone because this is when hardware designers (large companies like AMD, Intel, and Nvidia, as well as IP or PHY vendors) may start early prototyping and architecture work, albeit with contingency plans for possible changes. The most important point is that the specification is now mature enough for development work to begin.

One of the intriguing parts of the announcement is that PCI-SIG continues to evaluate new connector technology, which essentially means the current copper physical layer is getting uncomfortably close to its limits.

Loss budgets, crosstalk, and reflections have become serious constraints for PCIe 5.0 and 6.0, but with PCIe 8.0 and its 256 GT/s bit rate — a data transfer rate no copper-based standard has ever achieved — they are likely going to become a nightmare. At such speeds, the traditional edge connector and motherboard routing may not deliver acceptable signal integrity without excessive power (for equalization) or latency (for FEC). As a result, PCI-SIG might look to redesign PCIe slots with better materials and tighter tolerances, or to shorten electrical paths once again while increasing the number of redrivers per link. In any case, since PCI-SIG wants to maintain backward compatibility, do not expect any drastic changes on the connector level.

With this draft available, the PCIe 8.0 standard continues to progress toward final ratification in 2028.


Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.


FunSurfer: Seems that PCIe 8.0 will still have some connectivity issues... maybe it is best to wait for the optical fiber PCIe 9.0...

DS426: I'm guessing it'll get a naming rebrand when it goes to a physical optical bus. Any guesses?

hotaru251: I'd assume they just adopt a fiber connector that plugs into the card while allowing the connector to work w/o the fiber bit, thus allowing compatibility even if at reduced speed.

Li Ken-un:

hotaru251 said: "a fiber connector that plugs into card while allowing connector to work w/o the fiber bit"

Every connection introduces noise and signal integrity issues. The adapter itself will certainly degrade the signal.

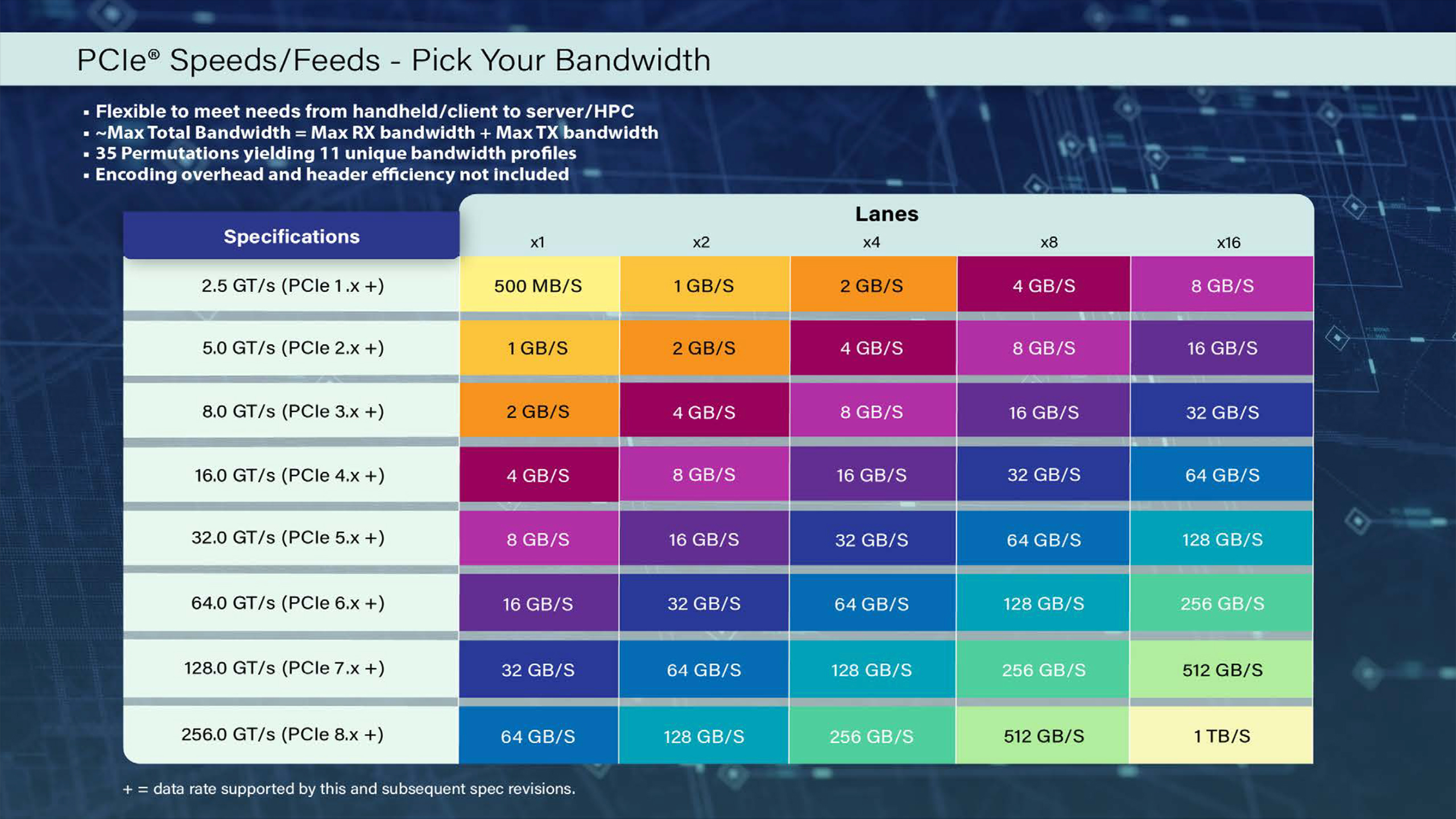

bit_user First, the chart in full-res:Reply

And now we can clearly see that they're doing that ridiculous thing of summing the bandwidth in both directions. I say it's ridiculous, because most workloads are bottlenecked in only one direction, not both. Also, most modern interconnects are bidir (or dual-simplex), so it almost goes without saying that you get the same non-interfering bandwidth in both directions.

Anyway, one aspect I find interesting is how much (uni-dir) bandwidth a single x4 link has. At PCIe 6.0 / CXL 3.0, that number (32 GB/s) starts to become very interesting for using CXL DIMMs. It's starting to approach the number you get with a regular DIMM.

MobileJAD:

Li Ken-un said: "Every connection introduces noise and signal integrity issues. The adapter itself will certainly degrade the signal."

Imagine if it were possible to just plug fiber directly into the CPU where its PCIe controller is and the other end to whatever you want to connect, like an NVMe device.

I am of course being somewhat sarcastic about how crazy tech is getting now and understand this stuff is purely going to be in an enterprise environment, but damn does it feel like things are being advanced towards their limits here.

bit_user:

MobileJAD said: "Imagine if it were possible to just plug fiber directly into the CPU where its pcie controller is and the other end to whatever you want to connect, like a nvme device."

It's coming sooner than you think. Not to your desktop, of course, but in AI servers.

https://www.youtube.com/watch?v=kS8r7UcexJU

More:

https://www.tomshardware.com/networking/nvidia-outlines-plans-for-using-light-for-communication-between-ai-gpus-by-2026-silicon-photonics-and-co-packaged-optics-may-become-mandatory-for-next-gen-ai-data-centers

Geef: Yep, pretty soon it's gonna be plugging yourself in. 🔌

[GIF: Motoko Kusanagi]

Jame5: Honestly, I want desktop CPUs to have maybe 4-8 lanes of this. Then it can break out to lower spec PCIe lanes, giving us FAR more lanes than we currently get. Imagine a CPU with 8 lanes of PCIe 8.0, that would give us the equivalent of 64 lanes of PCIe 5.0 connectivity to play with. Or 128 lanes of PCIe 4.0.

I'm kind of annoyed with 20 lanes of PCIe + however many half-quality lanes from the PCH/southbridge.

usertests:

Jame5 said: "Honestly, I want desktop CPUs to have maybe 4-8 lanes of this."

I won't bet on us ever seeing PCIe 7.0 in desktops, much less 8.0:

https://www.tomshardware.com/pc-components/ssds/pcie-6-0-ssds-for-pcs-wont-arrive-until-2030-costs-and-complexity-mean-pcie-5-0-ssds-are-here-to-stay-for-some-time

"You will not see any PCIe Gen6 until 2030," Kuo said. "PC OEMs have very little interest in PCIe 6.0 right now; they do not even want to talk about it. AMD and Intel do not want to talk about it."

At 16 GT/s (PCIe 4.0), traces can reach up to 11 inches with a 28 dB loss budget, but at 64 GT/s (PCIe 6.0), this drops to 3.4 inches with a 32 dB budget, depending on PCB materials and conditions, according to an Astera Labs presentation.

It sounds like PCIe 6.0 support could be brought alongside AM6 around 2030 (Zen 6 and Zen 7 will be on AM5).